How ETUNC combines probabilistic inference, historical coherence, and constitutional alignment to reduce semantic drift in agentic systems.

INTRODUCTION

When Plausibility Is Not Enough

Modern AI systems can produce outputs that are syntactically fluent, semantically coherent, and statistically plausible — and still be wrong in ways that matter institutionally. A system can generate a recommendation that sounds reasonable, cites relevant precedents, and clears confidence thresholds, yet simultaneously bypass a compliance requirement, contradict an organizational mandate, or create accountability gaps that only surface after a consequential decision has been made.

This is not a failure of language modeling. It is a failure of governance architecture.

The paradigm shift now underway in enterprise AI is not from capability to more capability. It is from capability to governability. The question enterprises increasingly need to answer is not “Can this system produce useful output?” but “Can this system produce output that belongs inside our institutional meaning structure — traceable, plural in its review, and accountable at every step?”

ETUNC’s architectural thesis has always been grounded in three governing properties: Veracity, Plurality, and Accountability. These are not abstract values. They are structural requirements that must be operationalized at the inference layer if AI is to function as Judgment-Quality AI rather than high-velocity autocomplete.

The Resonance Model is ETUNC’s answer to that operationalization challenge. It proposes a post-inference governance scoring layer that evaluates not only whether a candidate output is probable, but whether it coheres with the institution’s constitution, historical reasoning, and accountability requirements. This post describes the model’s architecture, its mathematical structure, its practical thresholding logic, and its significance for enterprise governance.

CORE RESEARCH DISCOVERY

The Resonance Model: Architecture and Foundations

Source Document

Resonance Can Be Implemented as a Post-Inference Governance Scoring Layer

Origin: ETUNC Internal Research Development | 2026

Core Concept

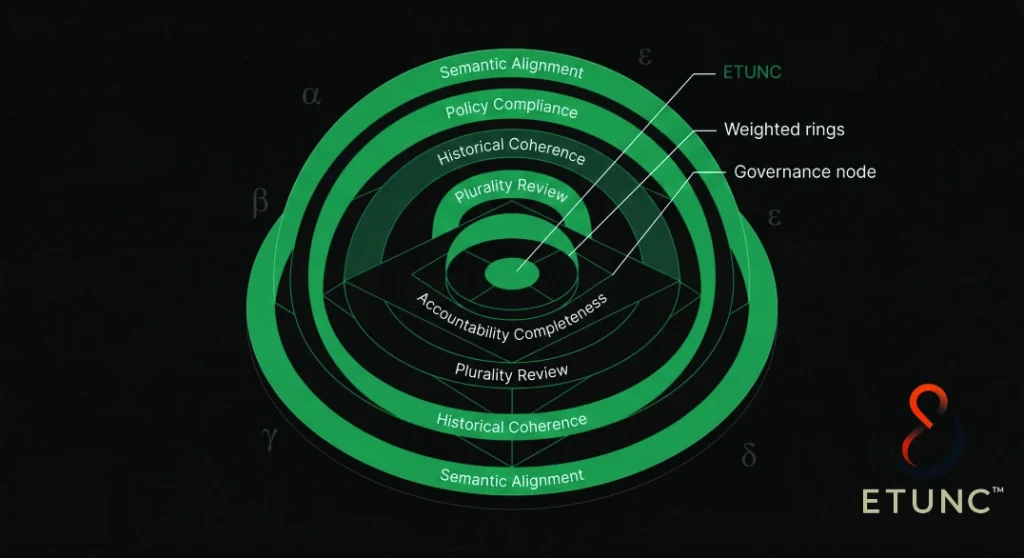

The Resonance Model proposes that AI governance cannot be reduced to a single confidence signal. Instead, every candidate output should be evaluated against a multi-dimensional scoring pipeline that combines semantic alignment with constitutional documents, explicit policy compliance, historical coherence with prior institutional decisions, plurality review across independent agents, and accountability completeness as measured by the presence or absence of full reasoning provenance.

The model’s governing equation expresses a governance-qualified decision score:

$$D(S) = P(S \mid E) \times R(S \mid C, H)$$

where $P$ is probabilistic plausibility, $R$ is constitutional resonance, and $D$ is the governance-qualified decision score.

Resonance itself is decomposed as:

$$R = \alpha\,\mathrm{SA} + \beta\,\mathrm{PC} + \gamma\,\mathrm{HC} + \delta\,\mathrm{PR} + \varepsilon\,\mathrm{AC}$$

where SA is Semantic Alignment, PC is Policy Compliance, HC is Historical Coherence, PR is Plurality Review, and AC is Accountability Completeness.
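As a rough illustration of the two equations above, the following Python sketch computes R as a weighted sum of the five subscores and multiplies it by P to obtain D. The weight values, the assumption that the weights sum to 1 and that every subscore lies in [0, 1], and the names `Subscores`, `resonance`, and `decision_score` are all illustrative assumptions; the source does not specify them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Subscores:
    """The five Resonance subscores, each assumed to lie in [0, 1]."""
    sa: float  # Semantic Alignment
    pc: float  # Policy Compliance
    hc: float  # Historical Coherence
    pr: float  # Plurality Review
    ac: float  # Accountability Completeness


# Hypothetical values for alpha, beta, gamma, delta, epsilon.
# The source does not publish weights; these are placeholders.
WEIGHTS = {"sa": 0.25, "pc": 0.25, "hc": 0.15, "pr": 0.15, "ac": 0.20}


def resonance(s: Subscores, weights: dict[str, float] = WEIGHTS) -> float:
    """R = alpha*SA + beta*PC + gamma*HC + delta*PR + epsilon*AC."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        # Assumption: normalized weights keep R inside [0, 1].
        raise ValueError("weights are assumed to sum to 1")
    return sum(weights[name] * getattr(s, name) for name in weights)


def decision_score(p: float, r: float) -> float:
    """D(S) = P(S | E) * R(S | C, H)."""
    return p * r


# A fluent, high-confidence output that scores poorly on policy compliance.
fluent_but_noncompliant = Subscores(sa=0.9, pc=0.2, hc=0.6, pr=0.5, ac=0.4)
r = resonance(fluent_but_noncompliant)
d = decision_score(p=0.95, r=r)
print(f"R = {r:.3f}, D = {d:.3f}")  # R = 0.520, D = 0.494
```

Under these placeholder weights, a model confidence of 0.95 still yields a decision score below 0.5, which is the separation between plausibility and resonance that the equations are meant to express.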

Why It Matters to ETUNC

This framework closes the gap between probabilistic inference and institutional legitimacy. High model confidence is necessary but not sufficient for enterprise deployment. The Resonance Model supplies the governance layer that confidence alone cannot.

VPA Alignment

- Veracity: The Semantic Alignment and Policy Compliance subscores together ensure that outputs are grounded in factual and constitutional reference material, not merely in statistical plausibility.

- Plurality: The Plurality Review subscore formalizes multi-agent cross-examination as a structural governance mechanism. Dissent is not failure — it is signal.

- Accountability: The Accountability Completeness subscore makes reasoning provenance a quantified governance requirement. Outputs that lack source citations, policy references, or audit trails receive lower scores regardless of semantic quality.
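One simple way to quantify the Accountability Completeness idea described above is to score the fraction of required provenance elements that are actually present. The sketch below does that in Python. The equal weighting of the three elements, the field names, and the function name `accountability_completeness` are assumptions made for illustration; the source states only that missing citations, policy references, or audit trails lower the score.

```python
# Provenance elements named in the text; the key names are hypothetical.
REQUIRED_PROVENANCE = ("source_citations", "policy_references", "audit_trail")


def accountability_completeness(provenance: dict[str, list[str]]) -> float:
    """Return the fraction of required provenance elements that are non-empty.

    Assumption: each element counts equally. A real scorer might weight
    them differently or check the quality of each entry.
    """
    present = sum(1 for key in REQUIRED_PROVENANCE if provenance.get(key))
    return present / len(REQUIRED_PROVENANCE)


complete = {
    "source_citations": ["handbook-section-4"],
    "policy_references": ["policy-17"],
    "audit_trail": ["retrieval-log-001"],
}
missing_audit_trail = {
    "source_citations": ["handbook-section-4"],
    "policy_references": ["policy-17"],
    "audit_trail": [],
}

print(accountability_completeness(complete))             # 1.0
print(round(accountability_completeness(missing_audit_trail), 3))  # 0.667
```

Because the score depends only on provenance, two outputs with identical wording can receive different AC values, which matches the claim that gaps lower the score regardless of semantic quality.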

ETUNC Integration Points

- Guardian: The Guardian layer activates when Resonance scores fall in the mid-range (0.60 ≤ R < 0.85). Guardian responsibilities include inspecting the failed subscores, preparing an escalation packet for human review, and recommending whether to approve the output, reject it, or revise the constitutional corpus. The Guardian does not re-answer the question; it governs the result.

- Resonator: The Resonance Scoring Engine is the Resonator in operational form — a scoring service that computes the five weighted subscores, applies threshold logic, and routes outputs to autonomous approval, Guardian review, or mandatory Human-in-the-Loop (HITL) escalation.

- Envoy: At the retrieval layer, the Envoy function assembles the evidence package (E) that feeds the scoring pipeline, drawing from constitutional documents, historical precedent corpora, and policy reference structures.
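The Guardian's inspection step described in the list above can be sketched as a small function that collects the weakest subscores into an escalation packet for a human reviewer. This is a minimal sketch under stated assumptions: the per-subscore floor of 0.60, the `EscalationPacket` structure, and the function name are hypothetical, since the source does not define what makes a subscore count as failed or what the packet contains. Only the mid-range band 0.60 ≤ R < 0.85 comes from the text.

```python
from dataclasses import dataclass

# Hypothetical floor below which a subscore counts as "failed";
# the source does not define this cutoff.
SUBSCORE_FLOOR = 0.60


@dataclass(frozen=True)
class EscalationPacket:
    """Illustrative payload handed to a human reviewer."""
    output_id: str
    resonance: float
    failed_subscores: tuple[tuple[str, float], ...]  # ordered worst first


def build_escalation_packet(
    output_id: str, resonance: float, subscores: dict[str, float]
) -> EscalationPacket:
    """Collect failed subscores for an output in the Guardian band."""
    if not 0.60 <= resonance < 0.85:
        raise ValueError("Guardian review applies only when 0.60 <= R < 0.85")
    failed = sorted(
        ((name, value) for name, value in subscores.items() if value < SUBSCORE_FLOOR),
        key=lambda item: item[1],
    )
    return EscalationPacket(output_id, resonance, tuple(failed))


packet = build_escalation_packet(
    "out-001",
    0.72,
    {"SA": 0.90, "PC": 0.55, "HC": 0.80, "PR": 0.40, "AC": 0.95},
)
print(packet.failed_subscores)  # (('PR', 0.4), ('PC', 0.55))
```

The function only reports on the scored result. It never regenerates the answer, which mirrors the division of labor the text assigns to the Guardian.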

THEMATIC SYNTHESIS

Governance as a Scoring Problem

The Resonance Model arrives at a critical architectural inflection: governance cannot be retrofitted onto AI systems as a post-hoc audit trail. It must be embedded in the inference pipeline itself, operating at the moment of decision, before outputs become institutional actions.

What this model makes visible is that the transition from capability-oriented AI to governance-oriented AI requires a fundamental reconceptualization of what “score” means in enterprise deployment. Confidence scores measure statistical probability. Resonance scores measure institutional coherence. These are different quantities, and confusing them is a source of significant governance risk.

The model also clarifies the role of historical precedent in agentic systems. History is evidence, not destiny. The Historical Coherence subscore retrieves and weights prior institutional decisions, but the constitutional corpus always takes precedence when conflicts arise. This reflects a mature institutional logic: organizations evolve, and their AI systems must evolve with them through a living constitutional update loop, not through uncritical deference to precedent.

Perhaps most importantly, the model formalizes disagreement as a governance asset. The Plurality Review subscore is not optimized for consensus. It is optimized for the quality of cross-agent examination — including the surfacing of dissent. A system in which agents always agree is not more trustworthy. It is more brittle. Robust institutional AI requires structured mechanisms for disagreement to surface, be recorded, and trigger escalation when warranted.
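To make the idea of recorded dissent concrete, the sketch below tallies independent agent verdicts, keeps every dissenting verdict with its rationale, and flags escalation when the dissenting share exceeds a limit. It does not compute the Plurality Review subscore itself, whose formula the source does not give. The `Verdict` structure, the majority rule, and the 0.25 dissent limit are illustrative assumptions.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Verdict:
    agent: str
    decision: str   # e.g. "approve" or "reject"
    rationale: str


def review(verdicts: list[Verdict], max_dissent_share: float = 0.25):
    """Return (majority decision, dissenting verdicts, escalate flag).

    Assumption: dissent is any verdict that differs from the most common
    decision, and escalation triggers when its share exceeds the limit.
    """
    if not verdicts:
        raise ValueError("at least one verdict is required")
    counts = Counter(v.decision for v in verdicts)
    majority, _ = counts.most_common(1)[0]
    dissent = [v for v in verdicts if v.decision != majority]
    escalate = len(dissent) / len(verdicts) > max_dissent_share
    return majority, dissent, escalate


verdicts = [
    Verdict("agent_a", "approve", "consistent with cited policy"),
    Verdict("agent_b", "approve", "matches prior decisions"),
    Verdict("agent_c", "reject", "required disclosure is missing"),
]
majority, dissent, escalate = review(verdicts)
print(majority, [v.agent for v in dissent], escalate)
# approve ['agent_c'] True
```

The dissenting rationale is preserved rather than averaged away, so a single well-founded objection can still reach a reviewer.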

The threshold logic that follows from the model is autonomous pass at or above 0.85, Guardian review from 0.60 up to but not including 0.85, and mandatory HITL below 0.60. Layered on top of these bands is a principle that is architecturally significant: some decisions should never be rescued by favorable averages. Hard rule failures trigger mandatory human review regardless of composite score. This is not a constraint on efficiency. It is a constraint on illegitimate automation.
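The routing rule in the paragraph above can be sketched in a few lines of Python. The 0.85 and 0.60 thresholds and the hard-rule override come from the text; the `Route` enum, the function name, and the example rule identifier are hypothetical names introduced for illustration.

```python
from enum import Enum


class Route(Enum):
    AUTONOMOUS_PASS = "autonomous_pass"
    GUARDIAN_REVIEW = "guardian_review"
    HITL = "human_in_the_loop"


PASS_THRESHOLD = 0.85      # from the text
GUARDIAN_THRESHOLD = 0.60  # from the text


def route(resonance: float, hard_rule_failures: list[str]) -> Route:
    """Route a scored output; hard rule failures override the composite score."""
    if hard_rule_failures:
        # A favorable average must never rescue a hard rule failure.
        return Route.HITL
    if resonance >= PASS_THRESHOLD:
        return Route.AUTONOMOUS_PASS
    if resonance >= GUARDIAN_THRESHOLD:
        return Route.GUARDIAN_REVIEW
    return Route.HITL


print(route(0.92, []))                               # Route.AUTONOMOUS_PASS
print(route(0.72, []))                               # Route.GUARDIAN_REVIEW
print(route(0.41, []))                               # Route.HITL
print(route(0.95, ["missing_required_disclosure"]))  # Route.HITL
```

Checking hard rule failures before any threshold comparison is the design choice that matters here: it guarantees that no composite score, however high, can route around mandatory human review.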

ACTIONABLE INSIGHTS

Architectural Directions: VPA Mapping

| Focus Area | Insight | ETUNC Direction |
| --- | --- | --- |
| Veracity | Embeddings alone do not validate rule compliance: semantic closeness ≠ institutional correctness. | Resonance Scoring Engine scores semantic alignment as one weighted subscore (SA), not the governing signal. |
| Plurality | Multi-agent disagreement is information, not system failure; surfaced dissent strengthens governance legitimacy. | Plurality Review (PR) subscore captures cross-agent agreement quality, adjusted for independence and surfaced dissent. |
| Accountability | Auditability requires complete reasoning provenance: missing source citations or policy references compromise legitimacy. | Accountability Completeness (AC) subscore enforces traceability; any gap reduces the composite Resonance score. |

PUBLIC NARRATIVE RESONANCE

No influencer or public narrative inputs were provided for this research development. The source material is ETUNC’s internal architectural research.

CONCLUSION

Institutional Legitimacy as an Architectural Property

The Resonance Model represents a meaningful architectural advance in the theory of enterprise AI governance. It moves the field beyond confidence-only evaluation and toward a multi-dimensional scoring framework that operationalizes Veracity, Plurality, and Accountability at the inference layer.

What has changed in the AI landscape is not the pace of model capability development. What has changed is the institutional demand for AI systems whose outputs can be defended — traced, reviewed, and legitimately attributed within organizational governance structures. The Resonance Model is a direct architectural response to that demand.

ETUNC’s governing principle has always been that trustworthy AI is not a property of models alone. It is a property of the systems, structures, and processes within which models operate. The Resonance Scoring Engine is the formalization of that principle at the decision layer.

| ETUNC does not ask only whether an output can be generated. It asks whether that output deserves institutional legitimacy. |

SUGGESTED RESOURCE LINKS

A. ETUNC Insights (Internal)

Governance-First Enterprise Automation — James Wm. Frank, March 15, 2026 (etunc.ai/insights)

Governability as Architecture — James Wm. Frank, March 1, 2026 (etunc.ai/insights)

Governance as the Matrix — James Wm. Frank, March 16, 2026 (etunc.ai/insights)

B. Academic / Technical (External)

- Constitutional AI: Harmlessness from AI Feedback — Bai et al., Anthropic (2022). Foundational treatment of constitutional governance as a structural property of AI systems.

- Toolformer: Language Models Can Teach Themselves to Use Tools — Schick et al., Meta AI (2023). Relevant to Envoy and retrieval architecture within agentic pipelines.

- NIST AI Risk Management Framework (AI RMF 1.0) — National Institute of Standards and Technology (2023). Standards-body framework for auditability and accountability in AI deployment.

- ReAct: Synergizing Reasoning and Acting in Language Models — Yao et al. (2022). Foundational architecture for reasoning-integrated agentic systems.

CALL TO COLLABORATION

Shared Stewardship of Trustworthy AI

ETUNC exists at the intersection of AI architecture and institutional governance. The work of building judgment-quality AI systems is not the work of any single organization. It requires researchers, institutional architects, governance practitioners, and enterprise leaders to engage with these problems as shared stewards of a technology whose consequences extend well beyond any single deployment context.

If your organization is navigating the transition from capability-oriented to governance-oriented AI — or if you are engaged in research that bears on constitutional design, multi-agent accountability, or the structural conditions for trustworthy agentic systems — ETUNC welcomes that conversation.

Contact ETUNC: https://etunc.ai/contact-page/